#bellogate - a Breakdown of the Spam.

This morning (9th October 2014) was not like any other morning. Usually I wake up and check my overnight email while having breakfast. This morning, however, I awoke to find just under 3,000 unread emails waiting in my work account. During the night someone on the UCL students email list had worked out how to send an email from the Provost’s email account to the all-students mailing list containing the single word “bello”. The email appeared to come from the Provost’s alias, but it is unclear whether the account was hacked (which would signal a security breach) or whether a student on campus simply knew how to spoof the email headers.

No one knows exactly what happened, and this is only speculation, but I think the general mailing list for all students was set up incorrectly, allowing anyone who knew its address to send any message to the whole student body. Until an official statement is released we won’t know for certain.

Naturally my first reaction was to start reading all of these emails to see what was being said between the students and to understand how they were using the service. Some students simply said “hello” or “bello”, and many responded to the mailing list saying “Please remove my name from the list”. My favourites among all these emails were the mailing lists that the mailing list alias was signed up to (the One Direction Fan Club, for one), along with a poem about the event:

There once was a hack with a bello

Done by a peculiar fellow

He sent it to all

You might even call

It a cry for a friendly hello

As of 9:30am the mailing list was closed down and an investigation is underway, according to the @uclnews Twitter account. @uclisd have done a great job keeping everyone notified, even to the point of apologising to all the students via text message to allay any concerns.

So what happens when you are researching ways to deal with unstructured textual data, have a toolkit that collects data from various services, and have access to all the emails that were sent? Obviously you analyse the data! I quickly wrote some software to pull the emails into the Big Data Toolkit and process them. I stripped out all identifying details such as email addresses and analysed only the date, time, subject heading and message body for information on what was being discussed. Below is a short breakdown of the data processed by my Big Data Toolkit.
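The anonymising step described above can be sketched in a few lines of Python. This is only an illustration using the standard library's `email` package, not the Big Data Toolkit's actual code; the function name `extract_fields`, the `[redacted]` placeholder and the address-matching pattern are all assumptions made for the example.

```python
import re
from email import message_from_string
from email.utils import parsedate_to_datetime

# Rough pattern for email addresses; an assumption for this sketch, not a full RFC 5322 matcher.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_RE.sub("[redacted]", text)


def extract_fields(raw_message: str) -> dict:
    """Keep only the send time, subject and plain-text body of one raw email."""
    msg = message_from_string(raw_message)

    # Walk the MIME tree and take the first text/plain part as the body.
    body = ""
    for part in msg.walk():
        if part.get_content_type() == "text/plain":
            payload = part.get_payload(decode=True) or b""
            charset = part.get_content_charset() or "utf-8"
            body = payload.decode(charset, errors="replace")
            break

    # From, To, Cc and every other header are simply never copied across.
    date_header = msg["Date"]
    return {
        "sent": parsedate_to_datetime(date_header) if date_header else None,
        "subject": redact(msg.get("Subject", "")),
        "body": redact(body.strip()),
    }
```

Dropping the headers alone is not enough, since people often paste addresses into the message text, which is why the subject and body are also passed through `redact`.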

The Data

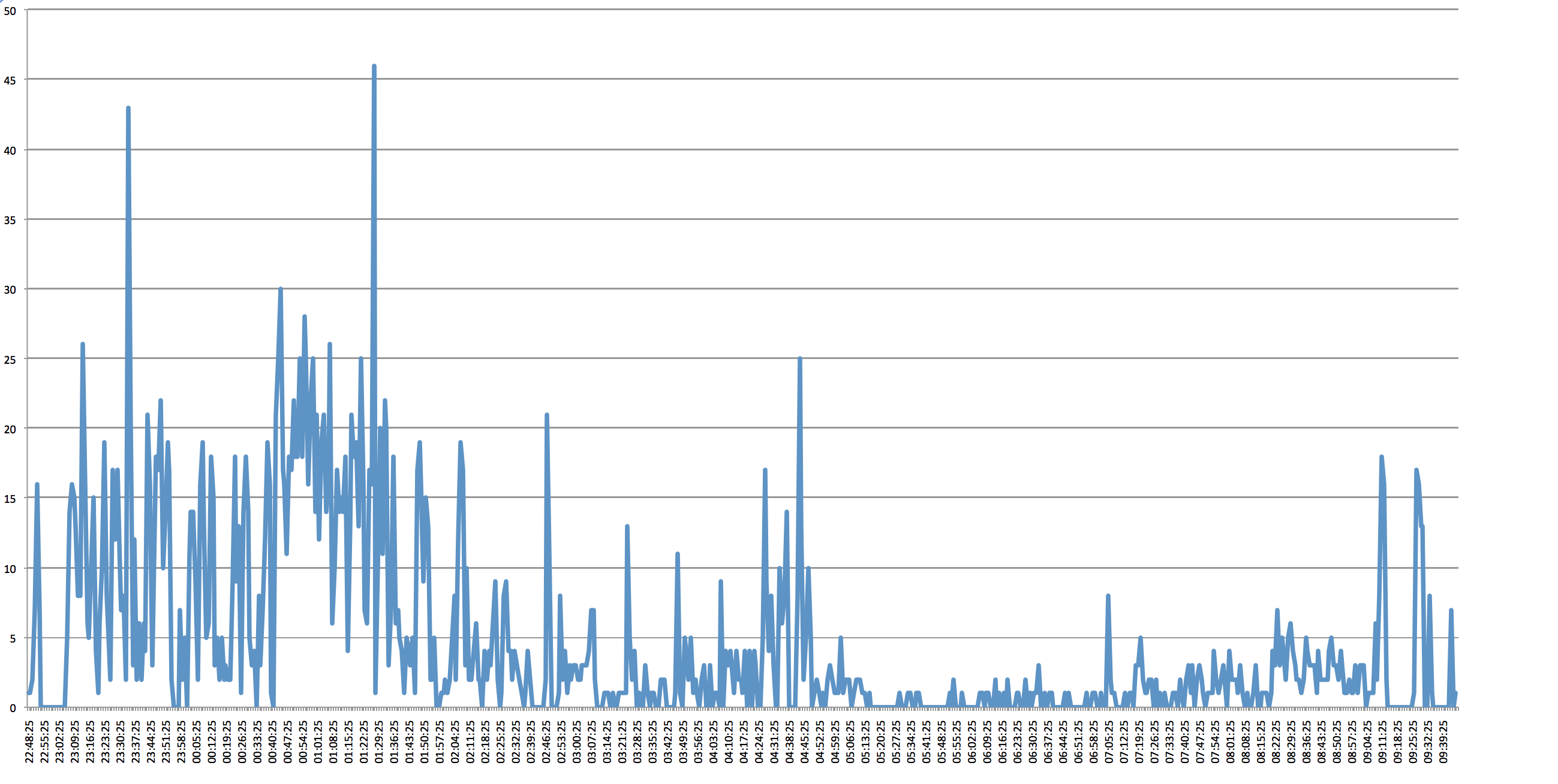

2,968 emails were sent out during the spam attack. Assuming that there are 26,000 students at UCL (from 2012 stats), the total load on the email servers was 77,168,000 messages (2,968 × 26,000) delivered over a period of about 11 hours.

First Email Sent: Wed Oct 08 2014 22:48:25 GMT+0100 (BST)

Last Email Sent: Thu Oct 09 2014 09:45:41 GMT+0100 (BST)

Total Period: 10 hours 57 minutes

Total Size of all 2,968 emails: 85.61 MB

Total Data storage for all students: 2.226 TB

Emails which were Subscriptions (Mailing Lists): 1,254
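As a quick sanity check, the headline totals can be reproduced from the raw figures. The sketch below assumes every one of the 26,000 students received every email and uses decimal units (1 TB = 1,000,000 MB); the variable names are mine.

```python
from datetime import datetime

EMAILS = 2_968
STUDENTS = 26_000        # assumed list size, taken from the 2012 figure
TOTAL_SIZE_MB = 85.61    # combined size of one copy of every email

first_sent = datetime(2014, 10, 8, 22, 48, 25)
last_sent = datetime(2014, 10, 9, 9, 45, 41)

deliveries = EMAILS * STUDENTS                     # one copy per student per email
period = last_sent - first_sent                    # length of the incident
storage_tb = TOTAL_SIZE_MB * STUDENTS / 1_000_000  # MB -> TB, decimal units

print(f"Deliveries: {deliveries:,}")    # Deliveries: 77,168,000
print(f"Period: {period}")              # Period: 10:57:16
print(f"Storage: {storage_tb:.3f} TB")  # Storage: 2.226 TB
```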

Distribution of sent messages (every minute)

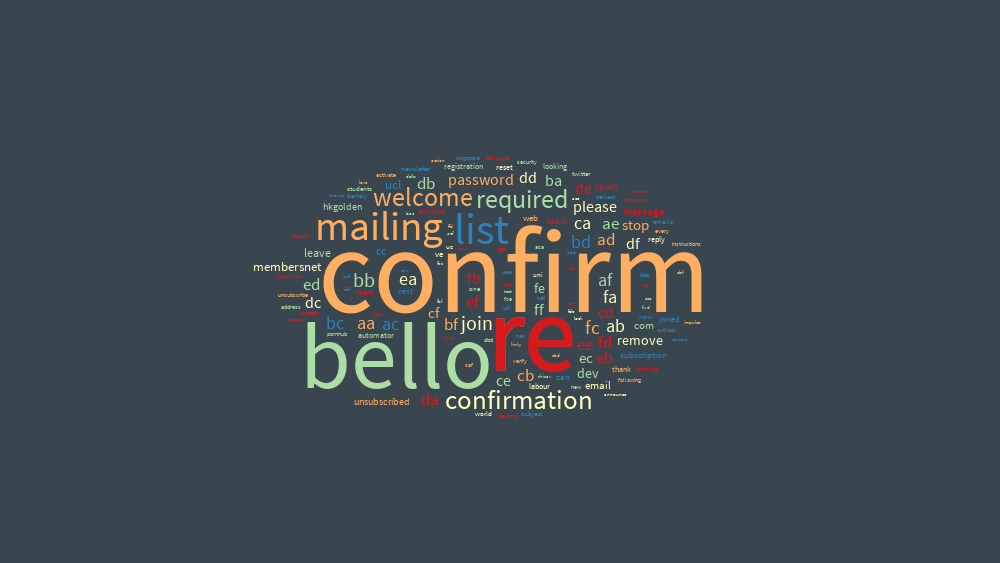
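A per-minute distribution like the one charted above can be built by truncating each send time to the minute and counting. This is a minimal sketch rather than the toolkit's own method, and the timestamps in the usage example are invented purely for illustration.

```python
from collections import Counter
from datetime import datetime


def per_minute_counts(timestamps):
    """Count messages per minute by truncating each timestamp to the minute."""
    return Counter(ts.replace(second=0, microsecond=0) for ts in timestamps)


# Made-up sample send times, for illustration only.
sample = [
    datetime(2014, 10, 8, 22, 48, 25),
    datetime(2014, 10, 8, 22, 48, 59),
    datetime(2014, 10, 8, 22, 49, 3),
]

for minute, count in sorted(per_minute_counts(sample).items()):
    print(minute.strftime("%H:%M"), count)
```

Minutes with no traffic do not appear in the counter at all, so a plot covering the full period would need to fill those gaps with zeros.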

Textal of Subject Headers (view on textal.com)